Hello,

I’m interested to know if anyone has ever tested Microsoft Azure text to speech and whether the generated audio is lip-synchronised.

Thanks

Oliver

Sooo…

To answer my own question: TTS via MS Azure works well. Unfortunately, after translation into another language the timecodes no longer match, and every single subtitle is shifted slightly. By the end of a 15-minute video, the accumulated time shift adds up to a few minutes.
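To illustrate how small per-subtitle differences can add up, here is a minimal Python sketch. It is only a simplified model, not how ActivePresenter or Azure actually place the audio: it assumes each generated clip either overruns or underruns the time slot of its subtitle, and that these differences simply accumulate. The function names and the clip durations in the sample are hypothetical.

```python
import re
from datetime import timedelta

# Matches an SRT timing line such as "00:00:01,000 --> 00:00:04,000".
TIMING = re.compile(
    r"(\d{2}):(\d{2}):(\d{2}),(\d{3})\s*-->\s*(\d{2}):(\d{2}):(\d{2}),(\d{3})"
)

def parse_srt_timings(srt_text):
    """Return a list of (start, end) timedeltas, one per subtitle cue."""
    cues = []
    for m in TIMING.finditer(srt_text):
        h1, m1, s1, ms1, h2, m2, s2, ms2 = map(int, m.groups())
        start = timedelta(hours=h1, minutes=m1, seconds=s1, milliseconds=ms1)
        end = timedelta(hours=h2, minutes=m2, seconds=s2, milliseconds=ms2)
        cues.append((start, end))
    return cues

def cumulative_drift(cues, audio_seconds):
    """Running sum of (clip length - cue slot length), in seconds."""
    drift = 0.0
    running = []
    for (start, end), audio in zip(cues, audio_seconds):
        slot = (end - start).total_seconds()
        drift += audio - slot
        running.append(round(drift, 3))
    return running

sample = """1
00:00:01,000 --> 00:00:04,000
First line.

2
00:00:05,000 --> 00:00:08,500
Second line.
"""

cues = parse_srt_timings(sample)
# Hypothetical lengths of the generated speech clips, in seconds.
drift = cumulative_drift(cues, [3.4, 4.0])
print(drift)
```

In this model, a translated sentence that takes only half a second longer to speak than its slot allows would already produce a shift of well over a minute across a few hundred subtitles.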

Unfortunately, manually adjusting the subtitles and re-exporting them to an SRT file does not work: the timestamps are not updated to reflect the manual change. Would it be possible to change this, so that the new timestamps are retained after the subtitles have been moved manually?

Hi Oliver,

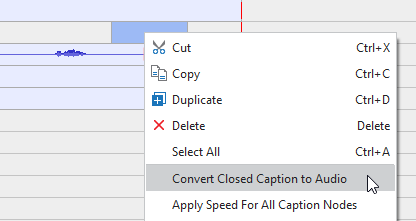

After manually adjusting the subtitles, you should right-click a closed caption object in the Timeline pane > select Convert Closed Caption to Audio.

By doing so, the audio will be re-generated to match the closed caption timestamp.

Hope it helps.

Regards,

Dear PhuongThuy,

Thanks for the answer. This is what I had already done and what I described in my second post. I guess this is not a problem with ActivePresenter but with Microsoft Azure. When I adjust the subtitles and export them to a new SRT file, the new timestamps are visible in the SRT when I open it with Notepad. But when this file is sent to MS Azure, the audio that comes back includes some new pauses which I had removed. So AP is doing a great job, but MS not…

I also found no setting on the MS page to adjust the output.